AI Candidate Screening: Designing a Hybrid Hiring System

Recruiting teams now operate under a mandate of absolute visibility, requiring them to reduce time-to-fill while maintaining fairness and high-fidelity reporting. As application volumes rise, many organizations deploy generic AI candidate screening agents to absorb the manual triage workload.

However, the bottleneck in high-volume hiring has shifted beyond simple resume review. The true constraints are fragmented data, inconsistent evaluation criteria, and a blurred line between execution-based tasks and strategic human judgment.

When these structural foundations are unstable, AI candidate screening does not resolve system inefficiencies; it scales them.

Deploying AI agents into a fragmented environment accelerates "process drift" and increases operational risk.

To move beyond mere automation, organizations must understand why AI screening fails in the absence of orchestration. In this blog, we’ll discuss why fixing it requires a shift toward a coordinated system where digital execution and human expertise operate within a single, disciplined framework.

High-Volume Hiring Challenges Corporate Teams Face

When hiring volume increases, operational complexity scales faster than human capacity. It often creates the illusion of a headcount problem, yet the root cause is typically a failure of orchestration. Without a disciplined system of work, high-volume cycles degrade into reactive firefighting, where speed is prioritized over the integrity of the hiring decision.

1. Hundreds Of Applications Per Role

High application volume exposes every structural weakness and manual workaround within a recruiting process. When hundreds of candidates apply for a single position, resumes cease to be reliable indicators of capability. They become inconsistent data points that create significant noise within your system of record.

Most leaders misdiagnose this as a staffing shortage, but it is fundamentally a failure of Workforce Orchestration.

To maintain velocity, recruiting teams are often forced into compressed evaluation windows. This operational drag introduces a critical risk vector called Subjective Variability. When a system lacks a disciplined evaluation framework, one recruiter may identify a candidate as a potential fit while another rejects them.

This inconsistency is the enemy of enterprise governance and prevents the hiring engine from producing predictable, high-fidelity results.
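One way to make Subjective Variability measurable is to have two recruiters independently screen the same sample of applications and compute how often they agree beyond chance. The sketch below uses Cohen's kappa, a standard inter-rater agreement statistic. It is our illustrative choice rather than a method prescribed here, and the function name and the "advance"/"reject" labels are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a: list[str], rater_b: list[str]) -> float:
    """Agreement between two raters, corrected for chance agreement.

    1.0 means perfect agreement; 0.0 means no better than chance.
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same, non-empty set of candidates")
    n = len(rater_a)
    # Observed agreement: share of candidates both raters labeled identically.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Expected agreement if each rater labeled at random using their own base rates.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters applied one identical label to every candidate
    return (observed - expected) / (1 - expected)


# Hypothetical decisions from two recruiters on the same ten resumes.
recruiter_1 = ["advance", "reject", "advance", "advance", "reject",
               "reject", "advance", "reject", "advance", "reject"]
recruiter_2 = ["advance", "advance", "reject", "advance", "reject",
               "reject", "advance", "advance", "advance", "reject"]
print(round(cohens_kappa(recruiter_1, recruiter_2), 2))
```

On this toy sample the recruiters agree on 7 of 10 resumes, but 50% agreement would be expected by chance alone given each recruiter's base rates, so kappa comes out at 0.4. Tracking the number over time shows whether shared success criteria are actually narrowing the gap.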

Furthermore, many organizations suffer from an over-reliance on external platforms like LinkedIn as their primary talent database. This "platform dependency" means internal data, the candidates already within your Salesforce environment, remains stagnant and underutilized.

With Asymbl’s AI-driven screening, teams can expand their reach beyond external silos. By leveraging digital labor to ingest and structure data from multiple platforms alongside your existing talent pool, you transform Salesforce from a passive archive into an active, cross-functional intelligence engine.

2. Limited Recruiter Capacity

In every recruiting function, there is a fundamental tension between two classes of work: the work of execution and the work of judgment. Most organizations fail to scale because they treat these as a single bucket of "recruiter tasks." However, they are two distinct modes of labor that require different levels of governance and orchestration.

Execution work is the "robotic" layer of the hiring lifecycle. It is high-volume, repeatable, and requires deterministic execution:

- Triage and resume parsing

- Interview scheduling and coordination

- Candidate follow-up and routing

- Logging status updates in the System of Record

Judgment work is where your humans provide their highest value. It is the work of wisdom, context, and ethical oversight:

- Defining success criteria with business leaders

- Calibrating hiring manager expectations

- Evaluating "contextual outliers" who don't fit a standard template

- Protecting candidate trust and identifying systemic bias

When hiring volume spikes, execution work cannibalizes the calendar. Despite an explosion of "efficiency tools," your recruiters’ judgment work becomes rushed, delayed, or skipped entirely.

Adding more "point solutions" to this mess only increases the noise. Without a clear division of labor between human and digital workers, your system of work will continue to degrade, and you lose the ability to make defensible, high-quality hiring decisions.

3. Pressure To Reduce Time-To-Fill

The demand for speed in corporate recruiting is relentless, but speed is a dangerous proxy for progress. Most talent teams are caught in a Productivity Paradox. They are running faster than ever, yet their actual business impact has plateaued.

This is because they are chasing "time-to-fill" as a metric of activity rather than an outcome of deterministic execution.

When you compress hiring cycles without a coordinated system, you aren't just hiring faster; you are increasing variance. In high-volume recruiting, speed without governance is a risk vector. It forces recruiters to prioritize "clearing the queue" over extracting high-fidelity signals from candidates.

This tradeoff is rarely visible on day one. It shows up six months later as attrition, role misalignment, or a "zombie" workforce that lacks the motivation to perform.

Without an orchestrated handoff between human judgment and digital execution, fast hiring simply accelerates your rate of failure. You might hit your time-to-fill targets, but you’ll do so by sacrificing the very data integrity and auditability required to scale a modern hiring workflow.

4. Inconsistent Candidate Experience

When you ask a human team to maintain a personal touch across thousands of applications without a coordinated workforce infrastructure, you are setting them up for a high-visibility failure.

Most recruiting stacks are a patchwork of disconnected point solutions. In this environment, every handoff is a potential "leak" in your candidate funnel. Delays are the result of data sitting in silos. When a candidate receives inconsistent messaging or faces long gaps in communication, it is an orchestration failure.

We often think of trust as a "soft" feeling, but in a competitive talent market, it is a hard conversion metric. If your process requires perfect human coordination to feel human, it will inevitably break under the weight of volume.

When trust decays, your "silver medalist" candidates, high-quality talent you’ve already vetted, disappear from your ecosystem because the system wasn't designed to retain them.

5. Bias, Compliance, and Legal Exposure

While AI screening tools are often marketed as objective, they are inherently trained on historical data sets that may reflect and amplify legacy hiring biases.

Without a disciplined orchestration layer to provide oversight, what is intended to be a speed solution can quickly evolve into a source of automated discrimination and systemic enterprise risk.

The legal reality is that organizations remain fully liable under Title VII for disparate impacts created by their automated tools, regardless of whether the algorithm was developed internally or purchased from a third-party vendor. This liability is compounded by the "black box" nature of many generic AI systems.

In an unspecialized environment, the lack of a clear audit trail makes it nearly impossible to justify specific rejection logic or demonstrate the criteria applied during a regulatory inquiry.
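An audit trail is easiest to defend when every screening decision is stored as a structured record that names the criteria version and reason codes applied. The sketch below shows one possible shape for such a record. The field names, the `criteria_version` scheme, and the JSON output are illustrative assumptions, not a description of how any particular ATS or Salesforce object stores this data.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class ScreeningDecision:
    """One immutable, explainable screening decision (hypothetical schema)."""
    candidate_id: str
    requisition_id: str
    criteria_version: str          # which version of the success criteria was applied
    outcome: str                   # e.g. "advance", "reject", "escalate"
    reason_codes: tuple[str, ...]  # why this outcome was reached
    decided_by: str                # digital worker ID or human reviewer ID
    decided_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def explain(log: list[ScreeningDecision], candidate_id: str) -> list[dict]:
    """Answer an auditor's question: what was decided, when, and on which criteria?"""
    return [asdict(d) for d in log if d.candidate_id == candidate_id]


log = [
    ScreeningDecision("C-1001", "REQ-42", "v3", "reject",
                      ("MISSING_REQUIRED_CERT",), "digital-worker-triage"),
    ScreeningDecision("C-1002", "REQ-42", "v3", "escalate",
                      ("NONLINEAR_EXPERIENCE",), "digital-worker-triage"),
]
print(json.dumps(explain(log, "C-1001"), indent=2))
```

Freezing the record means a decision cannot be edited in place after the fact; a correction would be appended as a new record, which preserves the history a regulator may ask to see.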

The regulatory landscape is tightening globally: New York City mandates bias audits for automated employment decision tools, California requires the retention of automated decision data, and the EU AI Act classifies these systems as high-risk.

Furthermore, the emergence of deepfake interviews and identity fraud in high-volume pipelines introduces new layers of accuracy risk and potential FCRA violations.

When digital labor operates without a structured "human-in-the-loop" framework, the organization loses the ability to provide necessary oversight at critical decision points. Bias, transparency, and fraud detection are not merely compliance checkboxes. They are fundamental design requirements.

If an AI screening system cannot withstand regulatory scrutiny or preserve human accountability within the primary system of record, it is not scaling the hiring engine efficiently.

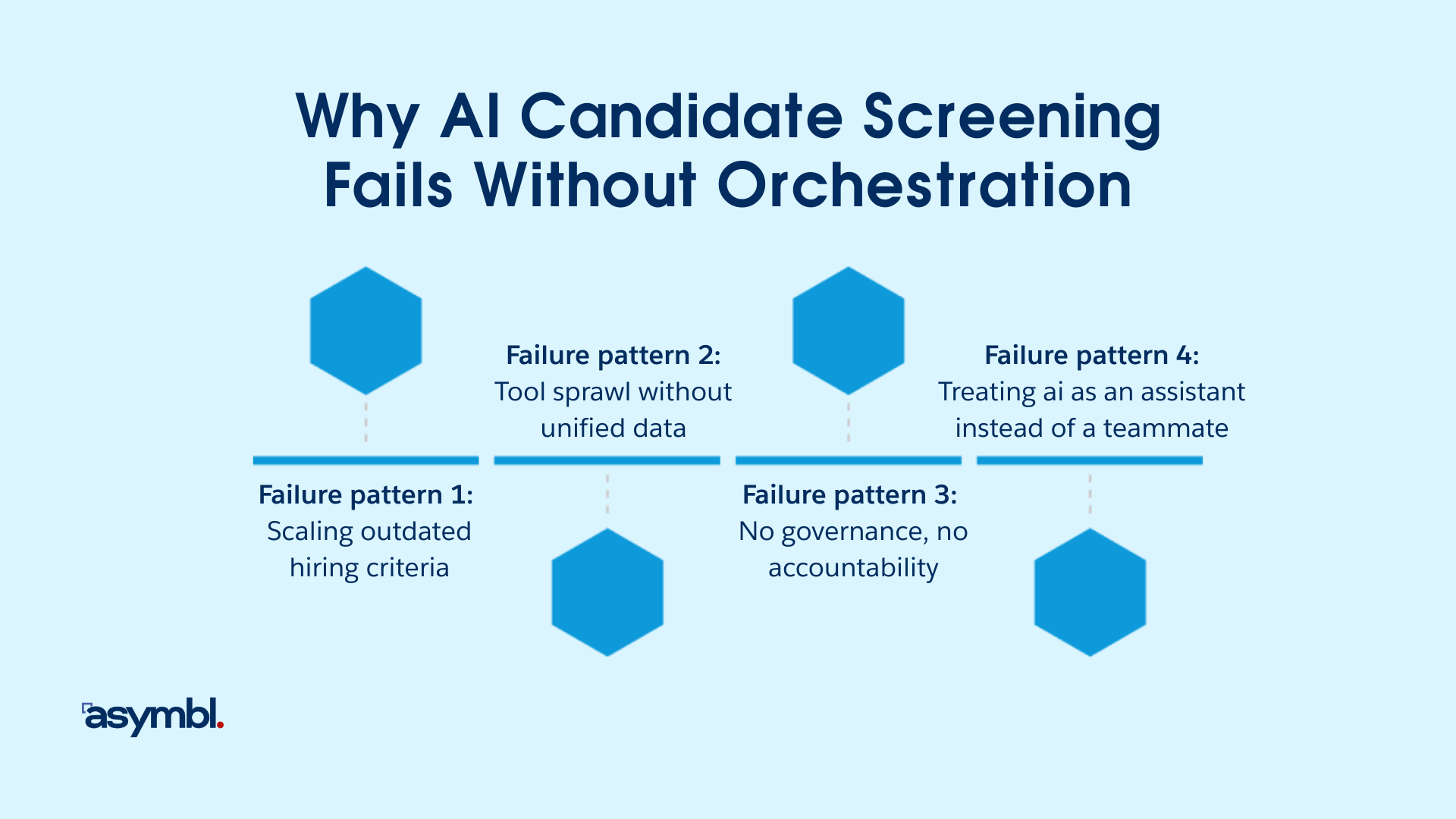

Why AI Candidate Screening Fails Without Orchestration

Most AI candidate screening conversations are stuck at the surface. Executives ask about "model capability":

- Can it parse faster?

- Can it rank better?

- Can it reduce manual review?

These are the wrong questions. They treat AI as a task upgrade, a digital band-aid for a capacity problem.

At Asymbl, we’ve seen this lead directly to the Productivity Paradox, where organizations layer intelligent technology onto fragmented workflows and wonder why their actual hiring outcomes have plateaued.

The SIGNAL Loop

A screening engine holds only when six elements stay aligned:

- S - Success Criteria Stay Current: Hiring criteria must reflect current business outcomes, not historical proxies. If the organization has not redefined what “good” looks like, AI scales outdated assumptions.

- I - Inputs Stay Unified: Screening depends on clean, unified data across sourcing, applications, interviews, and outcomes. Fragmented architecture produces fragmented intelligence.

- G - Governance Stays Explicit: Three questions need named owners. Who owns rejection decisions? Who monitors adverse impact? Who defines acceptable error thresholds? If these answers are unclear, AI becomes an opaque filter.

- N - Negotiation Stays Human: Hiring is not purely deterministic. Edge cases, nonlinear candidates, and leadership potential require contextual interpretation. If humans are removed from decision inflection points, quality erodes.

- A - Accountability Stays Measurable: Digital systems must have KPIs. If no one measures screening accuracy, bias drift, or downstream performance impact, the system cannot improve.

- L - Learning Stays Continuous: Screening must adapt as roles evolve, markets shift, and organizational priorities change. Without structured feedback loops, AI ossifies.
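The SIGNAL loop can be treated as a readiness checklist: if any element fails, the screening engine should not scale. The sketch below encodes the six checks as named booleans. The field names and the idea of gating rollout on them are our illustrative assumptions; in practice each flag would be backed by a real review, not set by hand.

```python
from dataclasses import dataclass, fields


@dataclass
class SignalCheck:
    """One boolean per SIGNAL element (hypothetical readiness review)."""
    success_criteria_current: bool   # S: criteria reflect current business outcomes
    inputs_unified: bool             # I: one clean data set across the funnel
    governance_explicit: bool        # G: named owners for rejections, adverse impact, error thresholds
    negotiation_human: bool          # N: humans sit at decision inflection points
    accountability_measurable: bool  # A: KPIs for accuracy, bias drift, downstream impact
    learning_continuous: bool        # L: a structured feedback loop is in place


def broken_elements(check: SignalCheck) -> list[str]:
    """Return the name of every SIGNAL element that is not in place."""
    return [f.name for f in fields(check) if not getattr(check, f.name)]


review = SignalCheck(True, False, True, True, False, True)
gaps = broken_elements(review)
print("ready to scale" if not gaps else f"fix first: {gaps}")
```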

When one element of SIGNAL breaks, AI accelerates the wrong thing. It scales outdated criteria. It formalizes fragmented data. It institutionalizes unclear governance. The result is pilot optimism followed by executive skepticism.

Failure Pattern 1: Scaling Outdated Hiring Criteria

Most AI screening initiatives are sold as a way to solve for capacity. The hidden danger is that AI is a mirror, not a visionary. It learns from what your organization has rewarded historically.

If your past hiring decisions were driven by narrow credential bias, inconsistent manager "vibes," or legacy role designs, your AI will simply institutionalize those flaws at a thousand times the speed of a human.

Too often, executives treat AI as an IT project, a tool to be "turned on," rather than a workforce initiative.

If you haven't redefined what "good" looks like based on current business outcomes, your digital workers will continue to hunt for proxies (like specific degrees) that no longer correlate to performance. Speed without governance is just a faster path to a mediocre workforce.

Failure Pattern 2: Tool Sprawl Without Unified Data

Most corporate recruiting environments are a patchwork of disconnected point solutions, including an ATS, a CRM, sourcing tools, and assessment platforms all operating in silos. Layering AI into this fragmented architecture creates a dangerous "Partial Truth" trap.

When AI operates on fragmented data, its scoring is fundamentally compromised. Context disappears between stages. A high-match score in a screening tool means nothing if it isn't reconciled with the revenue and performance data living in your System of Record. This creates what we call "Talent Tech Debt."

AI cannot compensate for a broken architecture. If your AI tools cannot see the full lifecycle of a candidate, they cannot provide deterministic execution. You end up with "Zombie Agents" that execute tasks in a vacuum, producing reports that lack credibility and insights that lack business context.

Failure Pattern 3: No Governance, No Accountability

Most organizations deploy AI screening as a tool that performs a task, rather than Digital Labor that performs a role. This creates a dangerous accountability vacuum. When an AI system rejects a candidate, the decision, and the liability, still belongs to the organization.

Without explicit governance, you haven't added efficiency. You’ve introduced a "black box" into your System of Record, and your ability to audit, defend, and improve your hiring outcomes erodes.

Governance cannot live in a PDF or a compliance handbook. It must be embedded into your Business OS. If your digital workers lack defined escalation logic and human oversight, they become "Zombie Agents," executing decisions that no one can explain and no one truly owns.

Algorithmic fairness is an employment requirement, not a technical suggestion. If your AI leads to disparate impact or disability discrimination, "the tool did it" is not a defense.

To move from digital chaos to operational control, your system must define clear decision rights:

- Who "coaches" the digital worker when it drifts?

- What is the threshold for human intervention?

- How is bias monitored in real-time within your Salesforce workflow?

If you aren't managing your digital labor with the same rigor as your human teammates, you aren't orchestrating a workforce; you are managing a liability.
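A common starting point for monitoring bias is the impact ratio: each group's selection rate divided by the selection rate of the most-selected group. The EEOC's Uniform Guidelines treat a ratio below 0.8 (the "four-fifths rule") as a general indicator of possible adverse impact, and New York City's bias-audit rules for automated employment decision tools also report impact ratios. The sketch below computes them from screening outcomes. The group labels and counts are made up, the 0.8 threshold is a rule of thumb rather than a legal safe harbor, and a real audit still needs statistical and legal review.

```python
def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to its selection rate divided by the highest group's rate.

    outcomes: group -> (number advanced by screening, number screened)
    """
    rates = {
        group: advanced / screened
        for group, (advanced, screened) in outcomes.items()
        if screened > 0  # skip groups with no screened applicants
    }
    top = max(rates.values(), default=0.0)
    if top == 0:
        return {}  # ratios are undefined when no one in any group was selected
    return {group: rate / top for group, rate in rates.items()}


def flag_adverse_impact(outcomes: dict[str, tuple[int, int]],
                        threshold: float = 0.8) -> list[str]:
    """Groups whose impact ratio falls below the four-fifths rule of thumb."""
    return sorted(g for g, ratio in impact_ratios(outcomes).items() if ratio < threshold)


# Hypothetical one-week screening results from a digital worker.
week = {"group_a": (120, 400), "group_b": (45, 200), "group_c": (70, 250)}
print(impact_ratios(week))
print(flag_adverse_impact(week))
```

Running a check like this on every batch inside the workflow, rather than once a year, is what turns bias monitoring from a report into a control and gives the human owner a concrete trigger for intervention.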

Failure Pattern 4: Treating AI As An Assistant Instead Of A Teammate

Executives often fall into the trap of positioning AI as a "helper" or "assistant" within the recruiting workflow. This is a fundamental mistake in Workforce Design.

Assistants suggest; teammates execute. Because an assistant doesn't "own" the outcome, your human recruiters either over-trust its recommendations or, more commonly, ignore them entirely.

Both outcomes are fatal to performance. You’ve added an assistant to save time, but your recruiters are now spending their day verifying the AI’s work. You haven't added capacity; you’ve added a new layer of "Execution Work" (manual verification) that cannibalizes their "Judgment Work."

Digital Labor must be managed with the same rigor as human staff. A screening engine is not a "tool"; it is a digital worker performing a defined role within your System of Record. Without a formal job description, clear KPIs, and a dedicated manager, your AI becomes a "Zombie Agent": it continues to process candidates, but it produces nothing but noise.

To achieve efficiency, your AI teammates need a coaching loop. Just as you wouldn't hire a human recruiter and never provide feedback, you cannot deploy AI screening without a structured path for optimization.

If the AI is not integrated into your Business OS with clear decision rights and escalation logic, it is a liability, not an asset.

The alternative is the intentional design of a Hybrid Workforce where digital workers execute the robotic tasks of triage and matching, allowing humans to focus on high-stakes judgment and relationship building. Without this structure, your AI initiative is just expensive noise.

The Right Solution: Workforce Orchestration

The fix for high-volume hiring is a fundamental redesign of how recruiting happens. Most corporate teams are stuck in a loop, trying to solve a capacity problem with more software.

However, you cannot automate your way out of a fragmented process. You must move from tool adoption to Workforce Orchestration.

Designing the Hybrid Operating Model

Workforce orchestration is the intentional design and management of a hybrid workforce where human and digital workers operate together as a coordinated system. It is a strategic framework built on the following pillars:

- Integrated Design: It is the combination of strategy, process, and technology that allows diverse workers to operate as one, much like instruments in an orchestra.

- A New Structure of Work: It moves beyond treating AI as an IT project or a standalone tool, reframing it as a workforce initiative where digital workers are given specific roles, KPIs, and continuous coaching.

- The Hybrid Model: It establishes a clear "line of scrimmage" where human workers focus on judgment, strategy, and high-value relationships, while digital labor handles repeatable, high-volume execution.

- Operating System Focus: Orchestration is designed to live where work already happens, inside the existing "Business OS" (such as Salesforce), to transform digital chaos into measurable control and revenue.

What Is the Digital Workforce?

A Digital Worker is a category of Digital Labor (comprising predictive, generative, and agentic technologies) that is onboarded to execute specific, repeatable tasks at scale. Unlike traditional software, a digital worker operates with a "job description," measurable KPIs, and a governance framework that allows it to work alongside human teammates.

The Digital Workforce is the collective ecosystem of these digital workers when they are integrated into the tools where work already happens (such as Salesforce). It represents a shift from "IT projects" to Workforce Initiatives. In this model, the workforce is hybrid:

- Digital Workers handle Deterministic Execution (the "robotic" work of triage, routing, and data logging).

- Human Workers handle Judgment Work (the "wisdom" work of strategy, empathy, and ethical oversight).

Most AI recruiting automations fail because they are treated as "assistants" rather than "teammates." The three key pillars of a digital worker are:

- Role-Based, Not Task-Based: A digital worker isn't just "parsing a resume"; it is "owning the triage stage" of the recruitment funnel. It has accountability.

- Embedded in the System of Record: For a digital worker to be effective, it cannot live in a silo. It must operate within the System of Record to ensure data integrity and avoid context leaks.

- Managed via the Coaching Loop: Just as human workers need management, a digital workforce requires a feedback loop where humans coach the agents to refine success criteria and eliminate bias.

"Digital workers perform roles. People design outcomes."

By defining the digital workforce this way, leadership moves away from managing a stack of tools and starts managing a coordinated system of intelligent labor that produces predictable, scalable ROI.

The Right Division Of Labor

The plateau in recruiting productivity is not caused by a lack of tools, but by a lack of Workforce Orchestration.

Most corporate teams force their most expensive asset, human recruiters, to act as manual data processors. This is a failure of leadership and infrastructure. Orchestration restores order by establishing a clear "line of scrimmage" between the two worker classes: human judgment and Digital Labor.

The Execution Layer: What Digital Labor Owns

In an orchestrated system, digital labor is assigned the high-volume workload that demands speed, consistency, and absolute auditability.

By moving these tasks to the digital workforce, you achieve the ability to produce the same high-fidelity outcome every time, regardless of volume.

- Extraction of Structured Signal: Digital workers turn unstructured resumes into high-fidelity data points within your System of Record. They don't just parse resumes; they score candidates against your explicitly defined success criteria, ensuring every decision is backed by an audit trail.

- Automated Triage and Qualification: Digital workers execute the first-pass qualification checks. They surface "contextual outliers" for human review while routing standard applicants with clear reason codes. This eliminates the "weak signal" problem that plagues manual screening.

- Logistics and Flow Orchestration: Scheduling and workflow routing are pure orchestration tasks. Digital workers manage the handoffs, coordinate calendars, and eliminate the data leaks that occur when a candidate sits in a siloed ATS for days without a status update.

- Trust-Building Communication: Candidate trust is a conversion metric. Digital workers ensure that no candidate falls into a "black hole" by automating follow-ups and routing next steps based on real-time data from your Business OS.
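The triage pattern described above (explicit criteria, a reason code on every outcome, and escalation of outliers to a human) can be sketched as a small rules function. Everything here is hypothetical: the criteria, the reason-code names, and the "career changes" outlier heuristic stand in for whatever your organization defines, and none of it reflects Asymbl's actual implementation.

```python
from dataclasses import dataclass


@dataclass
class Applicant:
    candidate_id: str
    years_experience: float
    has_required_license: bool
    skills: set[str]
    career_changes: int  # crude, hypothetical proxy for a nonlinear career path


REQUIRED_SKILLS = {"sql", "stakeholder management"}  # hypothetical success criteria
MIN_YEARS = 3.0


def triage(a: Applicant) -> tuple[str, list[str]]:
    """Return (route, reason_codes). Every route carries at least one reason code."""
    missing = REQUIRED_SKILLS - a.skills
    if not a.has_required_license:
        # Hard requirement: route out, but record exactly why.
        return "not_advanced", ["MISSING_REQUIRED_LICENSE"]
    if a.years_experience >= MIN_YEARS and not missing:
        return "advance", ["MEETS_ALL_CRITERIA"]
    if a.career_changes >= 2 and len(missing) <= 1:
        # Partial match with an unusual path: a contextual outlier for a human.
        return "human_review", ["CONTEXTUAL_OUTLIER"] + [
            f"MISSING_SKILL:{s}" for s in sorted(missing)
        ]
    codes = [f"MISSING_SKILL:{s}" for s in sorted(missing)]
    if a.years_experience < MIN_YEARS:
        codes.append("BELOW_MIN_EXPERIENCE")
    return "not_advanced", codes


for applicant in [
    Applicant("C-1", 5, True, {"sql", "stakeholder management"}, 0),
    Applicant("C-2", 1.5, True, {"sql"}, 3),
    Applicant("C-3", 4, False, {"sql"}, 0),
]:
    print(applicant.candidate_id, triage(applicant))
```

The key design choice is that no branch returns a route without a reason code, so every automated outcome can later be explained, and outliers are routed to a person instead of being silently filtered out.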

The Judgment Layer: What Humans Must Own

In an orchestrated system, the goal is not to automate humans out of the process, but to move them into the "high-fidelity" work that only they can perform.

When Digital Labor handles the repeatable execution of triage and routing, it creates the capacity for human recruiters to operate at the Judgment Layer. This is the highest-value labor in your organization, the work that turns a "recruiting function" into a strategic growth engine.

- Defining and Calibrating Success Criteria: Leadership cannot be delegated. Humans must define the business outcomes and translate them into the Success Criteria that digital workers follow. This is a dynamic coaching loop. As the market shifts, people must redefine what "good" looks like to ensure the system doesn't ossify.

- Evaluating Contextual Outliers: Digital workers are masters of pattern recognition, but humans are masters of context. Every high-performing team has "nonlinear" candidates, individuals whose resumes don't fit a standard template but whose potential is undeniable. Human Judgment Work is required to see the "why" behind the data, identifying the outliers who represent your next competitive advantage.

- Culture Contribution and Leadership Nuance: Assessing leadership potential and culture contribution is not a data-matching exercise. It is an act of wisdom. It requires the ability to interpret nuance, build relationships, and evaluate how a candidate will impact the team's chemistry. This is the "human-in-the-loop" requirement that ensures quality is never sacrificed for speed.

- Ethical Oversight and Governance: Accountability is a human mandate. Bias monitoring requires people to set the standards, validate the system for drift, and design interventions. The responsibility for algorithmic fairness stays with the employer. In an orchestrated workforce, humans act as the "governors" of the system, ensuring every decision is defensible and equitable.

While digital workers increase the clarity and consistency of the inputs, the final decision stays with people. This ensures that your hiring engine remains a reflection of your organizational values, not just a mathematical output.

The Strategic Shift: From “AI Vs Humans” To “Hybrid Workforce Orchestration”

Corporate teams are often fixated on a false binary: “Will AI replace recruiters?” That framing ignores the fundamental reality that we are entering a new era of Workforce Orchestration. The real shift isn't about replacement but about the emergence of a Hybrid Workforce where human and digital workers are finally organized to solve the Productivity Paradox.

Competitive advantage no longer belongs to the team with the "smartest tools." It belongs to the leaders who can design a coordinated system of Digital Labor and human expertise.

Orchestration: The New Operating Model For Talent

Workforce Orchestration is the intentional design of a system where Digital Labor is onboarded with the same rigor as human staff. This reframes AI screening from a "plug-in" into a core workforce component. When you move beyond local automation wins, your design decisions change:

- Deterministic Execution by Design: High-volume workflows, like triage, routing, and data logging, are assigned to digital workers. This isn't "assistance" but repeatable, predictable execution that ensures 100% consistency across millions of data points.

- Integrated Performance Management: Measurement moves beyond "recruiter activity" to a holistic view of system output. You manage your digital workers with KPIs, coaching loops, and performance benchmarks, just as you would a human team.

- Infrastructure over Fragmentation: Orchestration requires a single System of Record. By embedding digital labor directly into your Business OS (Salesforce), you eliminate the data leaks and context gaps that plague disconnected talent stacks.

- Embedded Governance: In a hybrid model, governance is a part of the operating rhythm. Auditability and bias monitoring are built into the workflow, ensuring that every decision, human or digital, is defensible and transparent.

When you treat recruiting as an operating system problem, it becomes solvable.

From Tool Deployment To Workforce Design

Most AI initiatives stall because they are managed as "deployments," a software-first approach that leads to pilot progress but production failure.

According to The GenAI Divide: State of AI in Business 2025 report from MIT’s NANDA initiative, 95% of generative-AI pilots fail to scale, and the report locates the problem in integration and organizational learning rather than in model quality. In our experience, that gap usually means companies treat AI as an IT project rather than a workforce initiative.

When AI is treated as an IT project, it remains siloed from the System of Record. It operates on partial truth, lacking the business context required for Deterministic Execution. This is why pilots feel successful in isolation but crumble when integrated into the high-stakes operating rhythm of a corporate recruiting team.

To break the paradox, leadership must shift from buying tools to "designing a workforce." With Asymbl, you can give your Digital Workforce:

- Explicit Governance: Defining who owns the decision and who monitors for drift.

- Defined Roles: Moving from task automation to assigned segments of the hiring lifecycle.

- Operational Integration: Embedding digital workers directly into your Business OS (Salesforce) so they share the same data as your human recruiters.

Designing Digital Workers As Teammates

Most AI tools currently operate on a "best guess" basis, which is Probabilistic AI. It suggests a match, and a human spends five minutes verifying it. This is the Verification Tax.

With Asymbl, you can design Digital Labor for Deterministic Execution so that the machine doesn't "suggest"; it enforces the rules you’ve built into your Business OS.

To transition from a tool license to a Hybrid Workforce, your digital workers must be integrated via a Structural Protocol:

- The Service Level Agreement (SLA) for Labor: A tool has uptime. A teammate has an SLA. You must define the success criteria for your digital workers within your System of Record (Salesforce). If the digital worker is responsible for the "Initial Triage" role, its performance is audited against the quality of the high-match signals it passes to the human layer. This transforms the AI from a background process into an accountable participant.

- Contextual Sovereignty: The "Assistant Trap" happens when AI doesn't know the history of the business. A true teammate shares your Institutional Memory. By embedding digital workers where work already happens, you give them "Contextual Sovereignty": access to the revenue signals, past interview feedback, and silver-medalist data that live in your Salesforce environment. Without this context, your AI is just an expensive stranger.

- The Exception Management Layer (Governance): In an orchestrated workforce, the human's most critical role is Exception Management. You design the "Line of Scrimmage" so that the digital worker executes 95% of the robotic, high-volume routing. When the system encounters a Contextual Outlier, a candidate with non-linear experience or high leadership potential that defies the pattern, it triggers an escalation. This is where human judgment is most valuable.

- The Calibration Rhythm (Coaching): Static automation is the enemy of growth. A digital workforce requires a coaching loop, where human operators review the reason codes provided by the digital worker. If the market shifts or a specific department's needs change, the human "re-calibrates" the worker's logic. This ensures your Workforce Orchestration evolves at the speed of your business, not at the speed of a software update.
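The SLA and calibration ideas above imply a concrete measurement: how often do recruiters uphold the routes the digital worker passes to them, and which reason codes do they override most? The sketch below computes both from a batch of human reviews. The 0.85 SLA target, the review record, and the function name are hypothetical placeholders for whatever your team defines.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Review:
    candidate_id: str
    worker_route: str   # what the digital worker decided, e.g. "advance"
    reason_code: str    # the primary reason code it attached
    human_agrees: bool  # did the reviewing recruiter uphold the route?


SLA_AGREEMENT_TARGET = 0.85  # hypothetical service level for the "Initial Triage" role


def sla_report(reviews: list[Review]) -> dict:
    """Summarize the agreement rate and the reason codes humans override most often."""
    if not reviews:
        return {"agreement_rate": None, "meets_sla": False, "recalibrate": []}
    agreement = sum(r.human_agrees for r in reviews) / len(reviews)
    overrides = Counter(r.reason_code for r in reviews if not r.human_agrees)
    return {
        "agreement_rate": agreement,
        "meets_sla": agreement >= SLA_AGREEMENT_TARGET,
        # Reason codes ordered by override count: candidates for re-calibration.
        "recalibrate": [code for code, _ in overrides.most_common()],
    }


batch = [
    Review("C-1", "advance", "MEETS_ALL_CRITERIA", True),
    Review("C-2", "not_advanced", "BELOW_MIN_EXPERIENCE", False),
    Review("C-3", "not_advanced", "BELOW_MIN_EXPERIENCE", False),
    Review("C-4", "advance", "MEETS_ALL_CRITERIA", True),
    Review("C-5", "not_advanced", "MISSING_REQUIRED_LICENSE", True),
]
print(sla_report(batch))
```

In this toy batch, recruiters overturned both experience-based rejections, which is the kind of signal the Calibration Rhythm is meant to surface: a human revisits the minimum-experience rule instead of the digital worker continuing to apply it unchecked.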

The "Teammate" framework is about System Integrity. It is the realization that you cannot scale a human-only team against modern volume, and you cannot trust a tool-only team with your culture. The only path forward is the intentional design of a Hybrid Workforce where the machine owns the volume and the human owns the wisdom.

The debate regarding AI replacing human recruiters ignores the fundamental requirement of modern talent acquisition: architectural resiliency. The critical question for leadership is not about the ratio of humans to machines, but whether your hiring system is built to manage volume, scrutiny, and complexity without operational failure.

Bias mitigation, auditability, and fraud detection are not merely defensive obligations but the primary indicators of a robust and mature hiring engine.

Scaling a human-only model against modern application volumes is no longer feasible, yet relying on a machine-only model compromises culture, nuance, and accountability. The solution is the orchestration of coordinated labor.

Within this framework, digital workers manage deterministic execution, such as high-frequency screening, scheduling, and data logging, while human experts retain ownership of strategic judgment, stakeholder calibration, and final oversight.

When this coordination occurs natively within your system of record, institutional risk is transformed into operational control. Transparency is no longer a compliance hurdle but becomes the foundation for signal quality.

By moving beyond siloed AI tools toward a hybrid workforce orchestration model, AI ceases to be a risk multiplier and becomes a signal amplifier.

Stop managing static records and start orchestrating a hybrid workforce. See how Asymbl unifies human judgment and digital labor on a single enterprise foundation. Book a demo today.
